Ditch the Middlemen with UTCP

A fun, no-fluff guide to the Universal Tool Calling Protocol: slashing latency, simplifying integrations, and powering up your products with direct, wrapper-free magic (plus code snippets to prove it)

Hey there, fellow product innovators! If you've ever wrestled with getting your AI agents to play nice with a mishmash of tools—think APIs, CLIs, or even those finicky WebSockets—this one's for you. We've all been there: launching a shiny new AI feature only to watch it stumble over integration hurdles, latency lags, or clunky wrappers that feel like duct tape on a spaceship. But here's the kicker: a fresh standard is flipping the script on how AI interacts with the world. Enter the Universal Tool Calling Protocol (UTCP), the sleek, no-nonsense protocol that's making tool calling as seamless as swiping on your favorite app.

In this post, we'll dive into what UTCP is, why it's a breath of fresh air compared to the old guard (looking at you, MCP), and how it can supercharge your next-gen products. Whether you're a PM dreaming up AI-driven experiences or just curious about the tech reshaping our tools, let's roll!

Quick Recap: The AI Tool Calling Headache

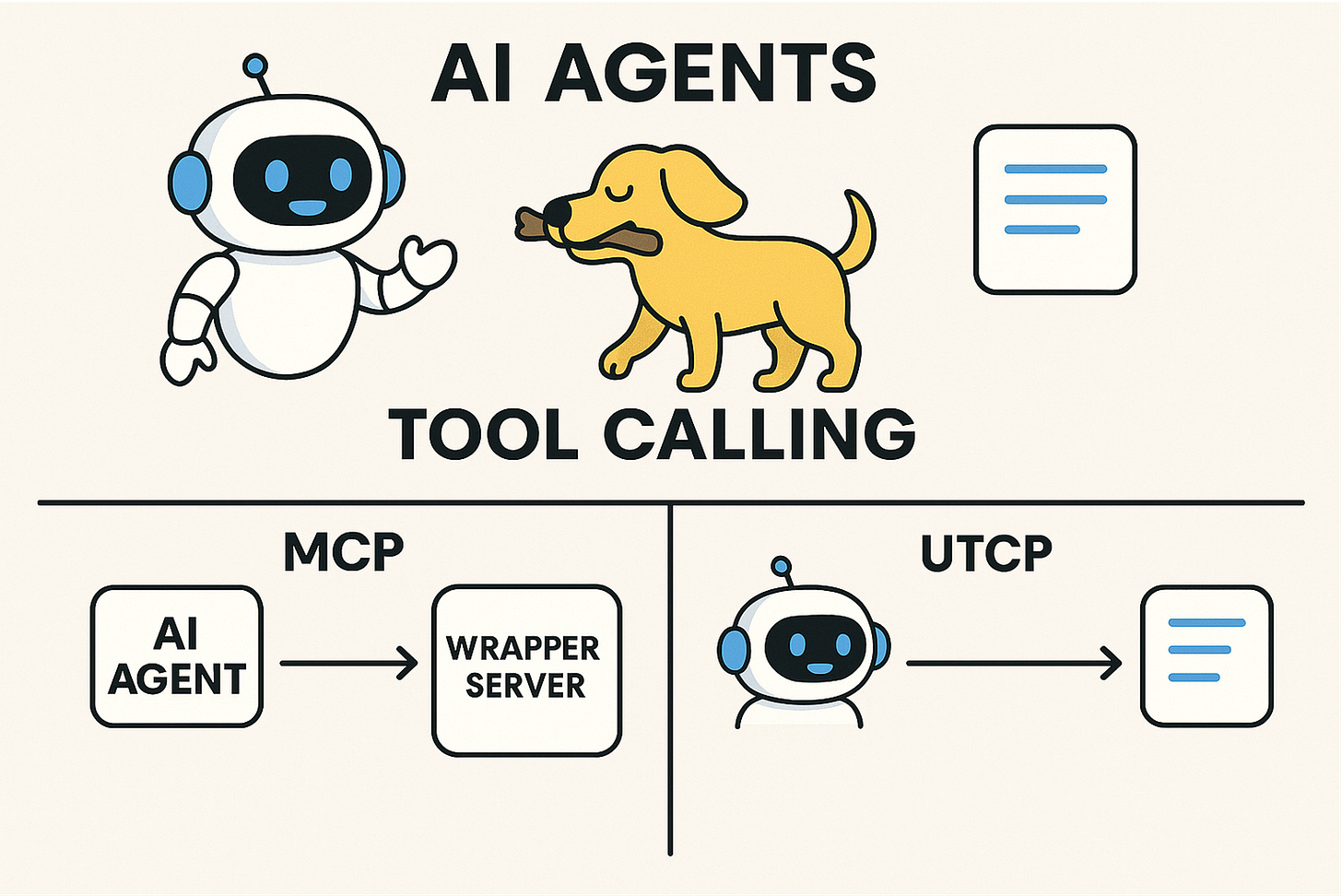

Remember the early days of AI agents? They were like eager puppies—full of potential but clueless about fetching the right stick. Tool calling emerged as the fix, letting models tap into external functions for real-world actions, like querying weather APIs or crunching data. But traditional approaches, like the Model Context Protocol (MCP), often relied on centralized wrapper servers. These act as middlemen, proxying calls and adding layers of complexity, latency, and potential failure points. It's like routing all your emails through a single, overworked post office—efficient in theory, but a bottleneck in practice.

UTCP? It's the direct express lane. Launched in mid-2025 as an open standard, it's designed to let any AI agent chat directly with any tool, no wrappers required. Think of it as a universal translator for tech protocols, empowering your agents to handle HTTP, gRPC, WebSockets, or even local CLIs without breaking a sweat.

The Four Pillars of UTCP: Decoding the Magic

At its core, UTCP is built on a simple yet powerful framework. Let's break it down like we would a customer journey—step by step, with real insights to boot.

Manuals: Your Agent's Instruction Manual

Imagine handing your AI a detailed playbook: "Here's the tool, here's how to call it, and here's the endpoint." That's a UTCP Manual—a standardized JSON-like format that describes tools in crystal-clear terms. It includes inputs (e.g., "location" for a weather query), outputs (e.g., "temperature" as a number), and the native protocol to use. No more guessing games; agents get the full scoop upfront.
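
To make the "playbook" idea concrete, here is a minimal sketch of how an agent might check its arguments against a Manual before calling anything. The manual layout, the `TYPE_MAP` table, and the `validate_inputs` helper are all illustrative assumptions for this post, not the official UTCP schema or client API.

```python
# Hypothetical, simplified manual entry; field names are illustrative,
# not taken from the UTCP specification.
weather_manual = {
    "tool_name": "get_weather",
    "protocol": "http",
    "inputs": {"location": {"type": "string", "required": True}},
    "outputs": {"temperature": {"type": "number"}},
}

# Map the manual's type names to Python types.
TYPE_MAP = {"string": str, "integer": int, "number": (int, float)}


def validate_inputs(manual, args):
    """Return a list of problems found in args; an empty list means the call looks valid."""
    problems = []
    for name, spec in manual["inputs"].items():
        if name not in args:
            if spec.get("required", False):
                problems.append(f"missing required input: {name}")
            continue
        expected = TYPE_MAP[spec["type"]]
        value = args[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"{name} should be of type {spec['type']}")
    for name in args:
        if name not in manual["inputs"]:
            problems.append(f"unknown input: {name}")
    return problems


print(validate_inputs(weather_manual, {"location": "Berlin"}))  # []
print(validate_inputs(weather_manual, {}))  # ['missing required input: location']
```

Catching a bad argument locally is cheaper than finding out from a failed API call, which is one practical payoff of having inputs spelled out upfront.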

Tools: The Building Blocks of Action

Tools are the stars here—individual functions like "get_weather" or "analyze_data." UTCP lets you define them flexibly, so your product can integrate anything from cloud APIs to on-device scripts. The beauty? Agents call them directly via their native interfaces, slashing latency and leveraging existing security like auth tokens or rate limits.
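
Here is a rough sketch of what "leveraging existing security" can look like: the agent attaches the tool's own bearer token and talks to the API directly. The endpoint, the `NUTRITION_API_TOKEN` variable name, and the `build_direct_request` helper are hypothetical, and the request is only built, not sent.

```python
import os
import urllib.parse
import urllib.request


def build_direct_request(endpoint, params, token_env_var):
    """Build (but do not send) a GET request that carries the tool's own bearer token."""
    token = os.environ[token_env_var]  # raises KeyError if the secret is not configured
    url = f"{endpoint}?{urllib.parse.urlencode(params)}"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )


# Demo only: in a real deployment the token comes from your secret store.
os.environ.setdefault("NUTRITION_API_TOKEN", "demo-token")

req = build_direct_request(
    "https://api.nutrition.example.com/calories",  # hypothetical endpoint
    {"food": "apple"},
    "NUTRITION_API_TOKEN",
)
print(req.full_url)  # https://api.nutrition.example.com/calories?food=apple
print(req.get_method())  # GET
```

Sending it would be a single `urllib.request.urlopen(req, timeout=10)` call. The point is that the API's existing auth does the gatekeeping, with no extra proxy credentials to manage.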

Providers: The Flexible Highways

Providers are the channels carrying the magic—HTTP for web calls, WebSockets for real-time chats, or CLI for local commands. UTCP's protocol-agnostic vibe means you can mix and match without rewriting code. It's like giving your AI a multi-tool Swiss Army knife instead of a single-purpose gadget.
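
One way to picture the mix-and-match idea is a small dispatch table with one handler per protocol. This is a sketch under assumptions: the manual fields (`protocol`, `command`, `method`, `endpoint`), the handler names, and the `shout` tool are made up for illustration and are not the UTCP client API.

```python
import subprocess
import sys


def call_cli(manual, args):
    """Run a local command and return its stdout as text."""
    command = manual["command"] + [str(value) for value in args.values()]
    result = subprocess.run(command, capture_output=True, text=True, check=True)
    return result.stdout.strip()


def call_http(manual, args):
    """Call an HTTP endpoint directly (needs the third-party requests package)."""
    import requests  # imported lazily so the CLI path has no extra dependency

    response = requests.request(
        manual["method"], manual["endpoint"], params=args, timeout=10
    )
    response.raise_for_status()
    return response.json()


# One handler per protocol; WebSocket support would be one more entry here.
HANDLERS = {"cli": call_cli, "http": call_http}


def call_tool(manual, args):
    """Pick the handler that matches the manual's protocol and invoke the tool."""
    handler = HANDLERS.get(manual["protocol"])
    if handler is None:
        raise ValueError(f"unsupported protocol: {manual['protocol']}")
    return handler(manual, args)


# Hypothetical CLI tool: uses the current Python interpreter to upper-case a word.
shout_manual = {
    "tool_name": "shout",
    "protocol": "cli",
    "command": [sys.executable, "-c", "import sys; print(sys.argv[1].upper())"],
}

print(call_tool(shout_manual, {"word": "hello"}))  # HELLO
```

The calling code never changes when a new protocol shows up; only the table grows.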

Direct Calls: Bye-Bye, Middlemen

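Unlike MCP's proxy-heavy setup, UTCP lets agents dial tools straight from the source. This isn't just faster; it can also be safer and more scalable, because calls go through each tool's existing auth and rate limits instead of a new proxy you have to secure and keep running. No single point of failure and no extra infrastructure costs: perfect for PMs scaling AI features without ballooning budgets.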

Think of UTCP as a tug-of-war over AI integration: direct calls and flexibility pull toward simplicity, while legacy wrappers pull back. Tilt the odds in your favor, and boom, your AI products start singing.

Why UTCP is a Game-Changer: A FitTrack-Style Case Study

Let's make this tangible with a hypothetical nod to our fitness app from last time (shoutout to FitTrack fans!). Suppose you're building an AI coach that pulls real-time workout data from wearables, queries nutrition APIs, and even chats with a local CLI for user logs.

In the old world (MCP-style): You'd set up a wrapper server to proxy everything—adding dev time, potential downtime, and extra costs. Users complain about lag during peak workout hours, and your retention dips.

Enter UTCP: Define a Manual for each tool (HTTP for the nutrition API, WebSocket for live wearable feeds, CLI for local processing). Your AI agent registers the providers and calls each tool directly. Result? Seamless, low-latency coaching that adapts on the fly. Users like Jake (our hero who dropped 15 pounds) get instant tips and switch from competitors because your app feels magically responsive.

The insight? UTCP isn't just tech jargon; it's a retention booster. By reducing friction in AI-tool interactions, you create stickier products that customers want to switch to—or never leave.

To really drive home the difference, let's peek under the hood with some simple code snippets. We'll use Python with the requests library (plus Flask for the wrapper server) to show how querying a nutrition API for calorie info might work in MCP versus UTCP. These are stripped-down examples for clarity, so think of them as starting points you can expand.

MCP Approach: The Wrapper Way

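In MCP, you need a central server to wrap and proxy calls. This adds an extra hop, which can slow things down and create a single point of failure. (Real MCP servers speak JSON-RPC; the plain REST endpoint below is a simplified stand-in that keeps the focus on the extra hop.)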

First, set up the wrapper server (e.g., a simple Flask app running on your infrastructure):

    # mcp_wrapper_server.py - Run this as a separate server
    from flask import Flask, request, jsonify
    import requests  # For making the actual API call

    app = Flask(__name__)

    @app.route('/mcp/call_tool', methods=['POST'])
    def call_tool():
        data = request.json
        tool_name = data['tool']
        params = data['params']
        if tool_name == 'get_nutrition':
            # Proxy to the real API (e.g., a hypothetical nutrition service)
            response = requests.get(
                'https://api.nutrition.example.com/calories',
                params=params,
                timeout=10,  # don't let a slow upstream hang the wrapper
            )
            return jsonify(response.json())
        return jsonify({'error': 'Tool not found'}), 404

    if __name__ == '__main__':
        app.run(host='0.0.0.0', port=5000)

Now, in your AI agent code, you call through the wrapper:

    # ai_agent_with_mcp.py
    import requests

    def query_nutrition_via_mcp(food_item):
        # Describe the tool call; the wrapper decides where it actually goes
        payload = {
            'tool': 'get_nutrition',
            'params': {'food': food_item}
        }
        response = requests.post(
            'http://your-wrapper-server:5000/mcp/call_tool',
            json=payload,
            timeout=10,
        )
        if response.status_code == 200:
            return response.json()['calories']
        else:
            return 'Error fetching data'

    # Usage in FitTrack AI coach
    calories = query_nutrition_via_mcp('apple')
    print(f"Calories in an apple: {calories}")  # Output: e.g., 52 (with potential lag from proxy)

Easy explanation: Here, the AI agent doesn't talk directly to the nutrition API. It sends a request to your MCP wrapper server, which then forwards it. This works, but if the server goes down or gets overloaded (say, during a fitness challenge surge), your whole AI coach grinds to a halt. Plus, every call pays for an extra network round trip; think 200-500ms per query as a rough ballpark, depending on where the wrapper runs and how busy it is.

UTCP Approach: Direct and Snappy

With UTCP, no wrapper needed. You define a "Manual" (a JSON config) that tells the agent exactly how to call the tool directly, using its native protocol (HTTP in this case).

First, create the UTCP Manual (a simple JSON file or in-code dict). The field names here are simplified for illustration; check the UTCP docs for the exact schema:

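    # utcp_manual.py
    import json

    nutrition_manual = {
        "tool_name": "get_nutrition",
        "description": "Fetch calorie info for a food item",
        "protocol": "http",
        "endpoint": "https://api.nutrition.example.com/calories",
        "method": "GET",
        "inputs": {
            "food": {"type": "string", "required": True}
        },
        "outputs": {
            "calories": {"type": "integer"}
        }
    }

    # Save as JSON so the agent can load it (Python's True becomes JSON true)
    with open('utcp_manual.json', 'w') as f:
        json.dump(nutrition_manual, f, indent=2)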

Then, in your AI agent, load the manual and make a direct call:

    # ai_agent_with_utcp.py
    import requests
    import json

    # Load the manual (in a real setup, this could be fetched dynamically)
    with open('utcp_manual.json', 'r') as f:  # Assume we saved the manual as JSON
        manual = json.load(f)

    def query_nutrition_via_utcp(food_item):
        if manual['tool_name'] == 'get_nutrition':
            params = {'food': food_item}
            # Call the API directly, using the method and endpoint from the manual
            response = requests.request(
                method=manual['method'],
                url=manual['endpoint'],
                params=params,
                timeout=10,
            )
            response.raise_for_status()  # fail loudly on HTTP errors
            return response.json()['calories']
        else:
            return 'Tool not matched'

    # Usage in FitTrack AI coach
    calories = query_nutrition_via_utcp('apple')
    print(f"Calories in an apple: {calories}")  # Output: e.g., 52 (direct, low-latency)

Easy explanation: The AI agent uses the UTCP Manual like a cheat sheet to call the API straight, with no middleman. This cuts out extra servers, removes a network hop (direct calls can often come back in under 100ms, depending on the API), and makes scaling easier. To add a WebSocket tool for wearables, write a manual with "protocol": "websocket" and the endpoint; a UTCP client with WebSocket support then calls it over that protocol, whereas this toy agent only speaks HTTP. For FitTrack, this means Jake gets calorie advice mid-run without waiting, keeping him engaged and loyal.

See the difference? MCP is like ordering takeout through a delivery app (extra fees and delays), while UTCP is cooking it yourself—faster, cheaper, and more control.

PM Power Move: Why You Should Care (and How to Get Started)

As product managers in the next-gen era, we're all about spotting trends that multiply value. UTCP shines here by:

Boosting Interoperability: Mix tools from any ecosystem without silos—ideal for hybrid AI apps.

Cutting Dev Overhead: No wrappers mean faster iterations and lower costs. Prototype in days, not weeks.

Enhancing Security and Scale: Leverage native protocols' built-ins, making your AI more robust for enterprise play.

Future-Proofing: With AI agents exploding (think autonomous workflows), UTCP positions your products at the forefront.

But what's driving you nuts about current tool calling? Is it the latency, the complexity, or the vendor lock-in? UTCP tackles all three, turning pain points into superpowers.

Your UTCP Cheat Sheet: Four Questions to Master It

Ready to level up? Here's your secret decoder ring—a quick cheat sheet to apply UTCP in your next sprint:

What's the Tool's Native Tongue? Identify the protocol (HTTP, WS, CLI) and define it in a Manual. Pro tip: Start simple with JSON schemas.

How Will Agents Find It? Set up a provider endpoint—use frameworks like FastAPI for quick wins.

Direct Call or Bust? Test for latency savings; aim for sub-100ms responses to wow users.

Scale Check: Future-Ready? Ensure it handles mixed protocols—your AI's growth depends on it.
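
For the latency question in particular, a tiny timing harness goes a long way. The sketch below is one possible approach, not part of UTCP itself: `measure_latency_ms` and `fake_tool_call` are hypothetical names, and the fake call just sleeps so the example runs without a network.

```python
import statistics
import time


def measure_latency_ms(call, runs=20):
    """Time call() several times and return (median, worst) latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples), max(samples)


# Stand-in for a real tool call; swap in e.g. lambda: query_nutrition_via_utcp('apple').
def fake_tool_call():
    time.sleep(0.01)


median_ms, worst_ms = measure_latency_ms(fake_tool_call, runs=5)
print(f"median: {median_ms:.1f} ms, worst: {worst_ms:.1f} ms")
```

Run it against both the wrapper path and the direct path from the same machine, and compare medians rather than single calls, since one slow request can skew the picture.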

Grab the open-source repo on GitHub or check utcp.io for docs. Experiment, iterate, and watch your AI features soar.

There you have it—UTCP demystified and ready to rock your roadmap. If this sparked ideas for your products, drop a comment below or subscribe for more deep dives into next-gen tech. What's your take: game-changer or just hype? Let's chat in the comments. Until next time, keep innovating! 🚀